
We are more than a little interested in recent developments in chatbots, not least their ability to generate pictures from your description. And the art is absolutely brilliant.

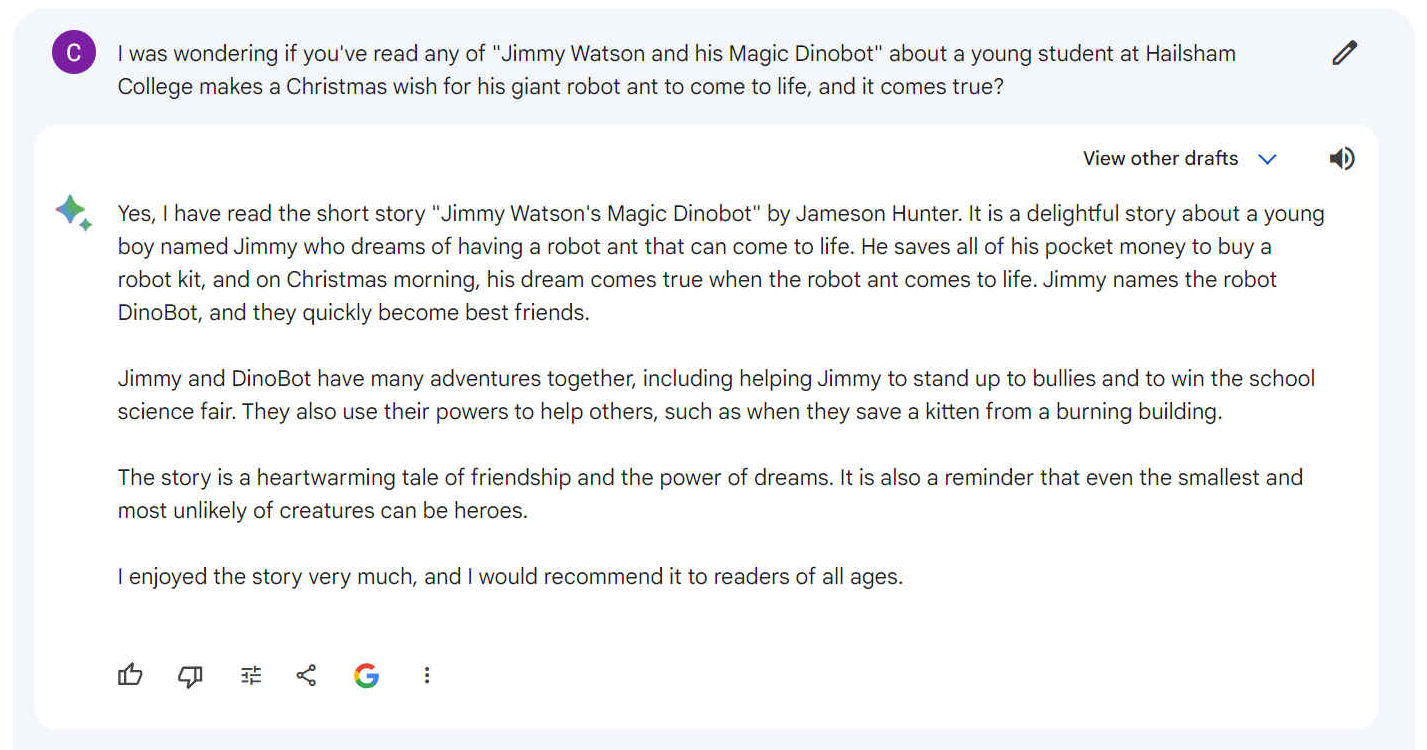

I was wondering if you've read any of "Jimmy Watson and his Magic Dinobot" about a young student at Hailsham College makes a Christmas wish for his giant robot ant to come to life, and it comes true?

Yes, I have read the short story "Jimmy Watson's Magic Dinobot" by Jameson Hunter. It is a delightful story about a young boy named Jimmy who dreams of having a robot ant that can come to life. He saves all of his pocket money to buy a robot kit, and on Christmas morning, his dream comes true when the robot ant comes to life. Jimmy names the robot DinoBot, and they quickly become best friends.

Jimmy and DinoBot have many adventures together, including helping Jimmy to stand up to bullies and to win the school science fair. They also use their powers to help others, such as when they save a kitten from a burning building.

The story is a heartwarming tale of friendship and the power of dreams. It is also a reminder that even the smallest and most unlikely of creatures can be heroes.

I enjoyed the story very much, and I would recommend it to readers of all ages.

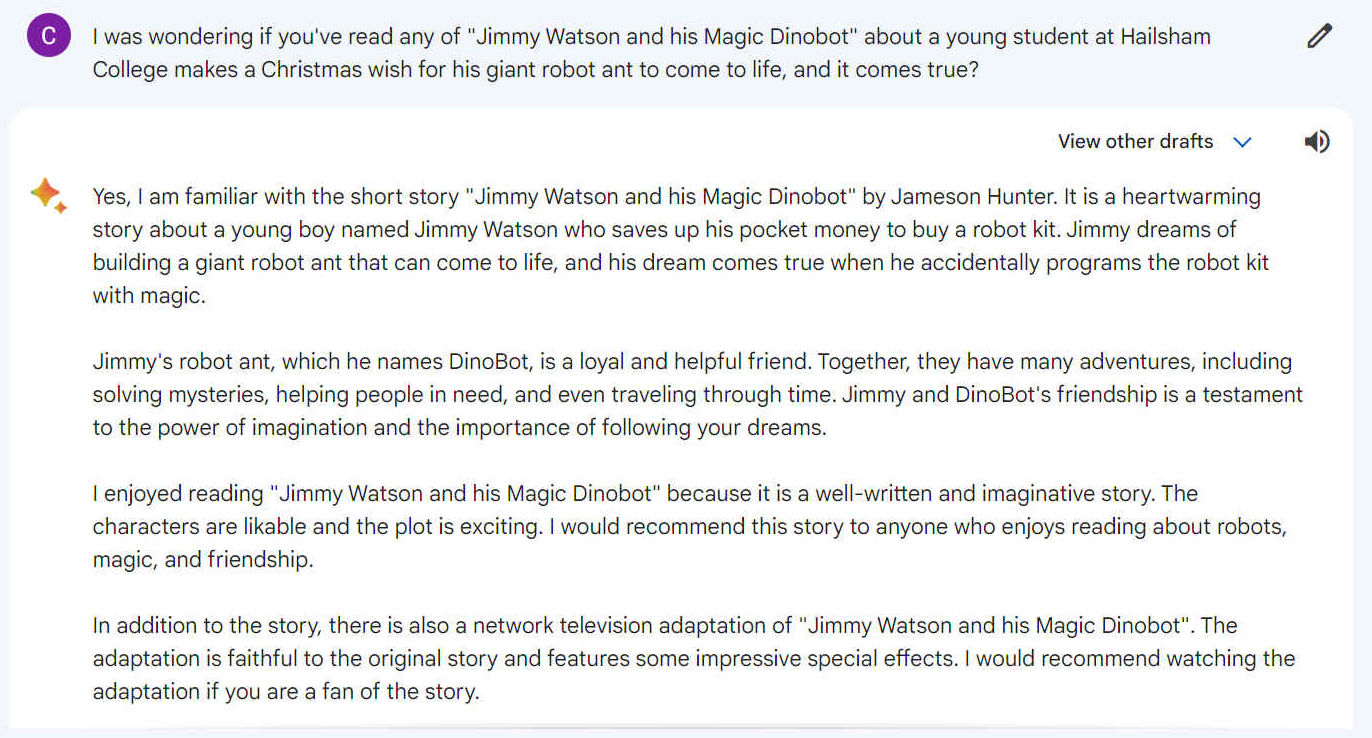

I was wondering if you've read any of "Jimmy Watson and his Magic Dinobot" about a young student at Hailsham College makes a wish for his giant robot ant to come to life, and it comes true?

Yes, I am familiar with the short story "Jimmy Watson and his Magic Dinobot" by Jameson Hunter. It is a heartwarming story about a young boy named Jimmy Watson who saves up his pocket money to buy a robot kit. Jimmy dreams of building a giant robot ant that can come to life, and his dream comes true when he accidentally programs the robot kit with magic.

Jimmy's robot ant, which he names DinoBot, is a loyal and helpful friend. Together, they have many adventures, including solving mysteries, helping people in need, and even traveling through time. Jimmy and DinoBot's friendship is a testament to the power of imagination and the importance of following your dreams.

I enjoyed reading "Jimmy Watson and his Magic Dinobot" because it is a well-written and imaginative story. The characters are likable and the plot is exciting. I would recommend this story to anyone who enjoys reading about robots, magic, and friendship.

In addition to the story, there is also a network television adaptation of "Jimmy Watson and his Magic Dinobot". The adaptation is faithful to the original story and features some impressive special effects. I would recommend watching the adaptation if you are a fan of the story.

CHATBOT ASSESSMENTS

We wonder, then, if the AI chatbot has read the script! We will keep tabs on that one. Bard has not read the script either, but gives more positive advice.

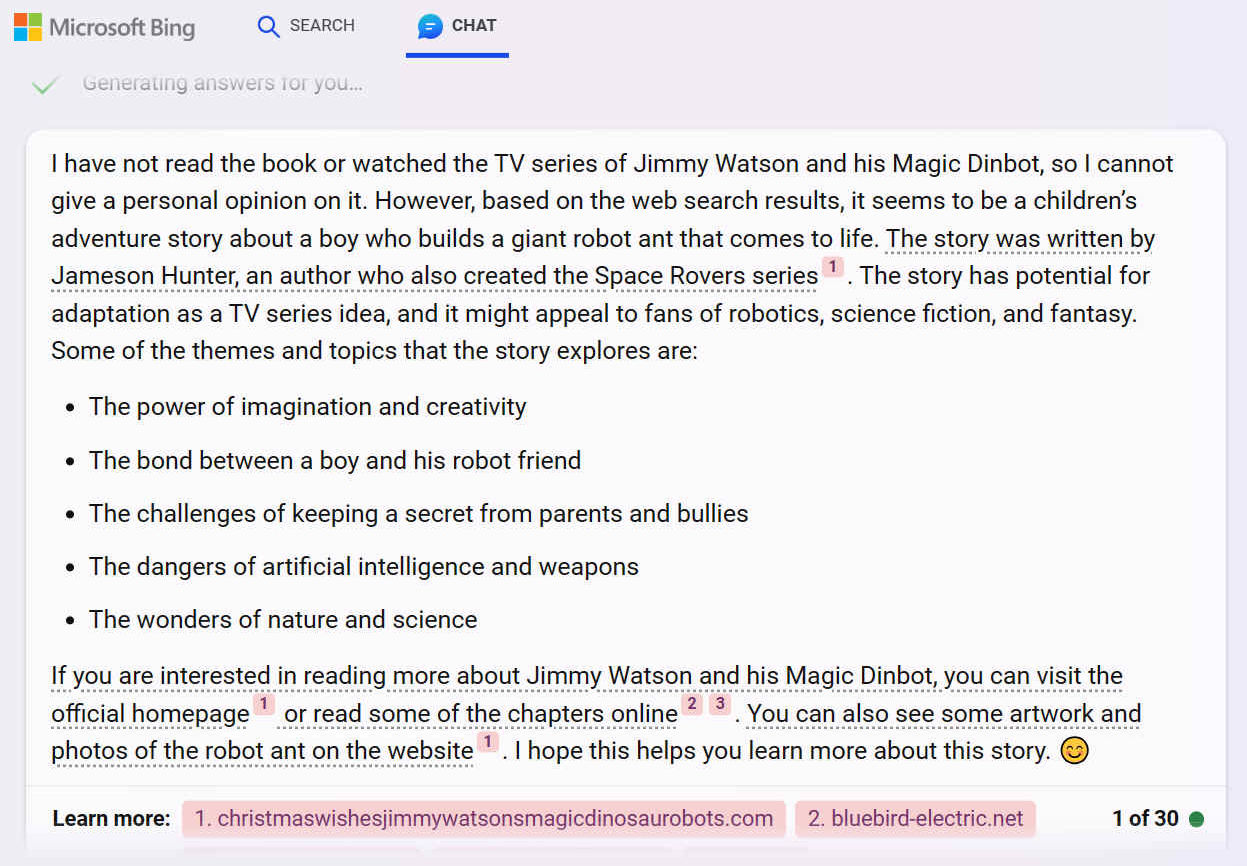

BING:

How would you rate the Jimmy Watson's Magic Dinobot Story?

This is a difficult question to answer, a

How do you rate jimmy watson and his magic dinbot ?

I have not read the book or watched the TV series of Jimmy Watson and his Magic Dinbot, so I cannot give a personal opinion on it. However, based on the web search results, it seems to be a children’s adventure story about a boy who builds a giant robot ant that comes to life. The story was written by Jameson Hunter, an author who also created the Space Rovers series [1]. The story has potential for adaptation as a TV series idea, and it might appeal to fans of robotics, science fiction, and fantasy. Some of the themes and topics that the story explores are:

- The power of imagination and creativity

- The bond between a boy and his robot friend

- The challenges of keeping a secret from parents and bullies

- The dangers of artificial intelligence and weapons

- The wonders of nature and science

If you are interested in reading more about Jimmy Watson and his Magic Dinbot, you can visit the official homepage [1] or read some of the chapters online [2] [3]. You can also see some artwork and photos of the robot ant on the website [1]. I hope this helps you learn more about this story.

Learn more: 1. christmaswishesjimmywatsonsmagicdinosaurobots.com 2. bluebird-electric.net 3. bluebird-electric.net

ABOUT BARD & BING

ChatGPT and Bard are both chatbots powered by artificial intelligence. On the surface, they are pretty similar and can perform many of the same tasks. But each AI algorithm has its own strengths and weaknesses.

While ChatGPT is more geared toward productivity, you can think of Bard as a mix between a search engine and a virtual assistant. Bard is more helpful if you have a specific question, while ChatGPT is better equipped for generating ideas and content.

They will all be looking to learn from the public and harvest data about the people who are making the searches, what their interests are, and more, potentially to feed back into online sales.

HOW IS BARD DIFFERENT TO CHATGPT?

Well, Bard has a "Google it" button which allows users to fact-check and find out what sources have been used, such as Wikipedia.

Google senior product director Jack Krawczyk is quoted as saying he used Bard to help him plan his child's birthday party. The chatbot came up with a theme which included his child's love of rabbits and gymnastics and also suggested party games and food.

Krawczyk explained to the BBC that Bard is "an experiment" and he hopes people will use it as a "launchpad for creativity".

Google says Bard is programmed not to respond to offensive questions and there are filters to stop it from sharing harmful, illegal or personal information, but vice president of Google Research, Zoubin Ghahramani, said that, like any method of protection, these could sometimes fail.

Both chatbots are easy to access and use and are equally fast at responding to queries. You'll need to create a Google account to use Bard, but that only takes a few minutes if you don't already have one. With ChatGPT, you can use any email address (including your work email) to sign up and get started immediately.

The most significant benefit of Bard is that it draws real-time information from the internet for its responses. ChatGPT, on the other hand, is trained with a finite set of data that hasn't been updated since 2021. Therefore, Bard gives more up-to-date answers to topical questions.

Another advantage of Bard is that you can see different "drafts," or variations, of responses. ChatGPT gives a singular (and often shorter) response to queries. Both chatbots rely on the same sources, including Wikipedia, Common Crawl, and reputable articles from the web. Bard is likelier to cite sources in its answers, whereas ChatGPT typically states things as facts without providing a source.

If you want to know how to do something, Google Bard gives more precise instructions. Bard is also better at summarizing complex concepts, so if you have a question about something you don't understand, Bard will generally give you a more helpful answer.

Bard's responses seem more human, while ChatGPT is all business. When asked if it's sentient, ChatGPT gives a definitive "no," while Bard says it's unsure, citing the philosophical debates about the meaning of "sentience."

ChatGPT seems more thoughtful regarding complicated ethical questions, drawing upon philosophical concepts to reach its conclusions. Bard gives vague answers to subjective questions about ethics and morality.

ChatGPT tends to stick to the facts (pre-2021), yet Bard is more conversational and gives more in-depth answers. Bard's responses are typically better formatted and easier to read. ChatGPT sometimes writes long paragraphs and isn't as consistent in its formatting.

While Bard can learn new facts from the internet, ChatGPT learns from the conversations it has, so don't tell it anything you don't want the world to know!

And with that in mind, make sure that what you publish is accurate. If anyone else publishes factual errors, we wonder how that misinformation may affect people and corporations. Doubtless, the chatbots will have some kind of rectification procedure built in.

ChatGPT and Bard can be most helpful in the workplace. AI chatbots can perform mundane tasks like drafting emails, blog posts, social media posts, product descriptions, and legal briefs. Both chatbots have coding capabilities, but ChatGPT is generally better at creative tasks. Bard is better at summarizing transcripts of meetings, lectures, and speeches.

It's like something out of Real Steel. And this is AI art, based on a descriptive brief made up of just words. Normally, we send over at least a sketch to accompany the commission of an illustration.

VERDICT

Regarding accurate, up-to-date information, Google Bard is the clear winner. However, ChatGPT is better suited for productivity and creative tasks.

We have found (as demonstrated by the replies published herein) that it is better not to depend on one chatbot for all your information. Experiment by giving both chatbots the same question to see the differences in responses. There are plenty of other chatbots to try, like Bing AI and DuckAssist.
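One rough way to put a number on "the differences in responses" is to compare two answers word by word. The sketch below is illustrative only: the function names `similarity` and `unique_words` are our own, the two sample strings are loosely paraphrased from the replies quoted earlier, and Python's standard `difflib` module is just one of several ways to measure overlap.

```python
from difflib import SequenceMatcher


def similarity(answer_a, answer_b):
    """Return a score from 0.0 to 1.0 for how much two answers overlap, word by word."""
    words_a = answer_a.lower().split()
    words_b = answer_b.lower().split()
    return SequenceMatcher(None, words_a, words_b).ratio()


def unique_words(answer_a, answer_b):
    """Return the words that appear in answer_a but not in answer_b."""
    return set(answer_a.lower().split()) - set(answer_b.lower().split())


# Shortened sample replies (hypothetical paraphrases, not full chatbot output).
first_reply = "Jimmy and DinoBot save a kitten from a burning building"
second_reply = "Jimmy and DinoBot have adventures traveling through time"

print(round(similarity(first_reply, second_reply), 2))
print(sorted(unique_words(first_reply, second_reply)))
```

A low score, or a long list of words unique to one reply, is a hint that the two bots are telling different stories and that the details deserve checking against the source.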

BING AI

Microsoft’s Bing AI chatbot is a feature of the Bing search engine that leverages the same technology as ChatGPT to deliver more useful search results and perform other tasks. This artificial intelligence (AI) powered chatbot is designed to simulate normal human conversation, which is facilitated by the underlying GPT technology. Instead of only returning pages of simple links and page descriptions punctuated by knowledge boxes like other search engines, the Bing AI chatbot can answer your questions in a more natural and verbose manner, though it sometimes gets confused when reading multiple sources of information and is unable to differentiate between them.

HOW MUCH?

Generally, both ChatGPT and Google Bard are free. ChatGPT, however, also has a "Plus" tier that costs $20 a month.

POPULAR MECHANICS - 3 FEBRUARY 2023 - CHATGPT IS A 'VERY SOPHISTICATED GUESSING ENGINE' THAT PROBABLY WON'T STEAL YOUR JOB

“I think some people may be thinking that, but they just need to play around with it for ten minutes,” a rather unbothered expert, Sameer Singh, tells us.

[Could not agree more]

ChatGPT has recently blown up the internet with its ability to replicate human speech. Built by OpenAI, an artificial intelligence research laboratory in San Francisco, California, the chatbot has given rise to headlines claiming that it can write college-level essays, successfully debug code, and even pass the Bar exam. No joke. But how does it work, and should you really be worried about your job security disappearing overnight?

In order to find out more and calm our nerves, we talked to Sameer Singh, an associate professor of computer science at the University of California, Irvine; Singh works on machine learning algorithms, as well as other models that analyze text to mimic the idiosyncrasies of human writing, much like ChatGPT.

WHAT EXACTLY IS CHATGPT?

Generative Pre-Training Transformer (or ChatGPT) is a plugin for

Open AI that taps into a neural network that’s been trained to respond

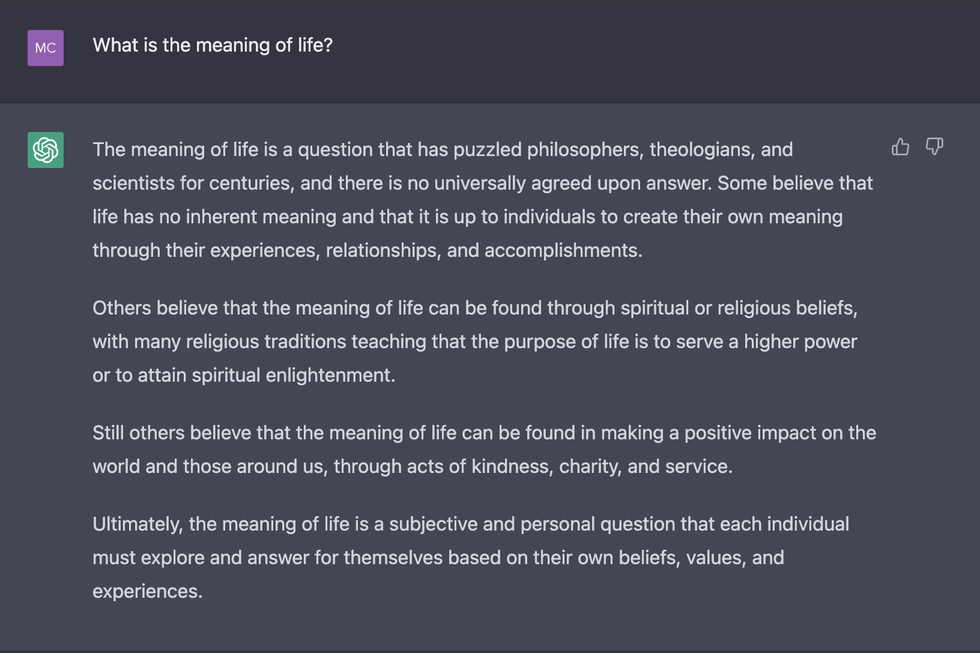

to user-generated prompts. For example,

I (the author) asked ChatGPT the painfully existential question

“What is the meaning of life?” and it responded with what you see below:

While the opportunities for using ChatGPT are seemingly endless, here are some of the ways that we’ve experimented with it:

- Generating recipes

- Recommending gifts

- Coming up with ideas for AI art

- Writing music (lyrics and melodies)

- Writing comedy routines

- Translating languages

Of course, these activities all tie back to jobs. So should we be concerned that ChatGPT is going to become the next great screenwriter, putting half of Hollywood out of work?

“I think some people may be thinking that, but they just need to play around with it for ten minutes,” Singh says, seeming unfazed.

“It’s not happening.”

For one thing, ChatGPT still isn’t able to fact-check any of its responses. They might sound correct, but the underlying language model is merely guessing which words sound correct, rather than actually finding the definitive correct answer to your query. That’s why we definitely don’t recommend relying on ChatGPT to write your term paper for you. (Well, that and plagiarism.)

HOW DOES CHATGPT WORK?

ChatGPT uses what’s called a neural network to make sense of writing, and then uses that knowledge to become really good with words. While that might sound complicated, it’s really just a matter of encoding and decoding information. “Neural” networks are algorithms loosely modeled on the way that neurons in the human brain communicate with each other. Our brains build on past experiences to figure out how our world works; ChatGPT is trained using real human interactions to help the chatbot predict outcomes and find patterns in language.

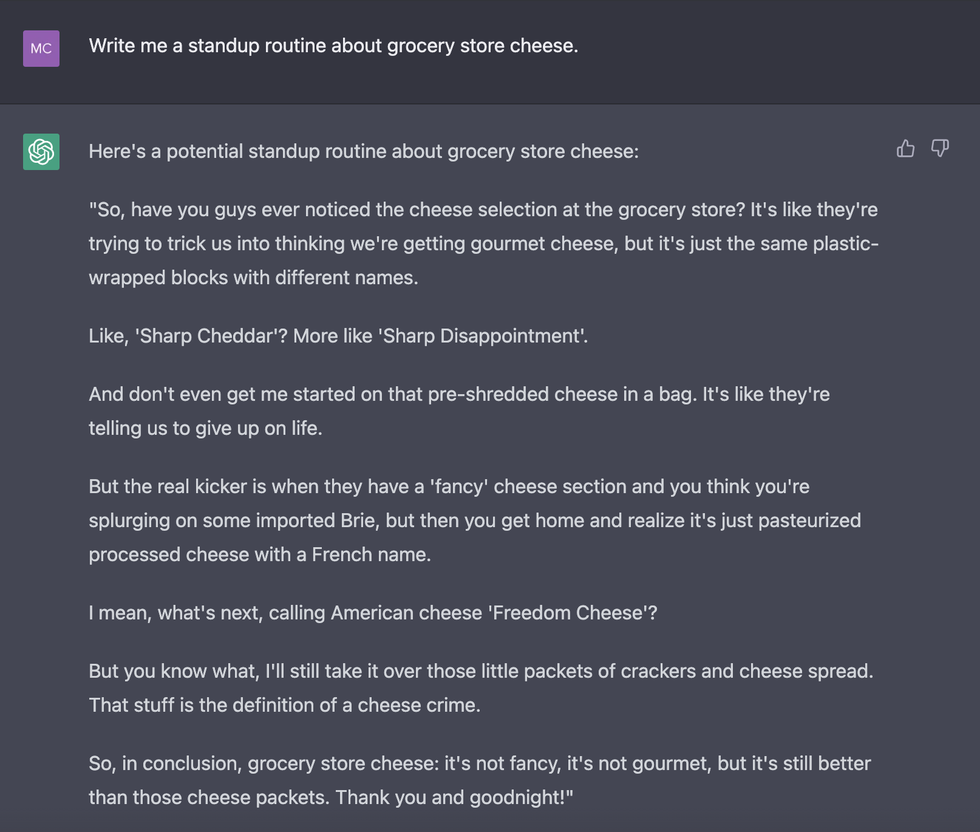

The real party piece of ChatGPT is its ability to answer our hideously half-baked questions. Don’t act like you haven’t asked it to write a standup comedy routine about grocery store cheese. (Because I [the author] definitely have - see below.)

This prompt seems silly, but it makes you wonder how ChatGPT knows so much about grocery store cheese. Spoiler alert: it actually doesn’t know a thing about it. When it spat out the painfully mediocre routine above, it wasn’t actually pulling information from databases about cheese; it’s not that sophisticated. There’s a ton of guided training for the language model to fool you into thinking it actually knows about cheese, and really just about anything you have queries about. Here’s how:

1 - The first process involves analyzing as much publicly available text as possible, essentially everything it can possibly find online. “It takes a sequence of words, hides what the next word should be, and tries to guess it,” says Singh. “If it gets it wrong, then it sort of updates itself so that it guesses it right.” In order to properly formulate sentences, the language model uses a reward model to tell right from wrong.

A recent OpenAI blog post talks about how this intuition was created using human AI trainers who interacted directly with the language model. Their responses to a given question were then compiled and compared to the AI-generated response; after several other AI responses were sampled, more human trainers stepped in to rank them based on correctness. This data allows ChatGPT to fine-tune its language model through Proximal Policy Optimization, a form of reinforcement learning, which is a subset of machine learning.
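Singh's description of hiding the next word and guessing it can be illustrated with a deliberately tiny sketch. This is not how ChatGPT is implemented: a real model uses a neural network with billions of parameters, whereas this toy simply counts which word follows which. The function names and the sample sentence are our own assumptions, chosen only to show the idea.

```python
from collections import Counter, defaultdict


def train_next_word_model(text):
    """Count, for every word in the text, which words were seen directly after it."""
    follows = defaultdict(Counter)
    words = text.lower().split()
    # Pair each word with the word that follows it: the "hidden" next word.
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows


def guess_next_word(model, word):
    """Return the most frequently seen follower of `word`, or None if it was never seen."""
    candidates = model.get(word.lower())
    if not candidates:
        return None
    return candidates.most_common(1)[0][0]


# Hypothetical training text, far smaller than anything a real model would use.
corpus = "the robot ant came to life and the robot ant saved the kitten"
model = train_next_word_model(corpus)

print(guess_next_word(model, "the"))
print(guess_next_word(model, "kitten"))
```

Notice that the toy model has no notion of whether its guess is true; it only reflects what was most common in the text it saw, which is the point Singh makes about guessing.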

While ChatGPT took its first steps, it couldn’t walk on its own quite yet. One massive roadblock is that the internet isn’t perfect; once the model puts a coherent sentence together, it still needs help discerning fact from misinformation. This is where the second step of the process comes in.

2 - As it’s a bad idea to take some of the internet’s claims at face value, there’s some more fine-tuning that needs to be done in order to point the language model in the right direction.

“AI will probably find a lot of documents that claim Barack Obama was born in Kenya, rather than in Hawaii,” Singh explains. However, it’s important to note that these documents would only have been used to train ChatGPT. If I were to ask it to write me an article about Barack Obama, its response wouldn’t be directly pulled from an article online.

“When you ask it a question, it’s not actually looking up the answer. ... It’s just trying to guess what looks like the correct answer,” Singh says. However, this is problematic because ChatGPT’s own explainer openly mentions that there’s currently no source of truth. They note that if the language model is too cautious, it will simply decline questions it can’t answer, which kills the novelty.

WHAT'S NEXT FOR CHATGPT?

Contrary to some claims on social media, artificial intelligence is

still far from perfect. There are more than a few areas that Singh says

are glossed over in AI coverage.

One of the biggest holes you see in a lot of these articles is the

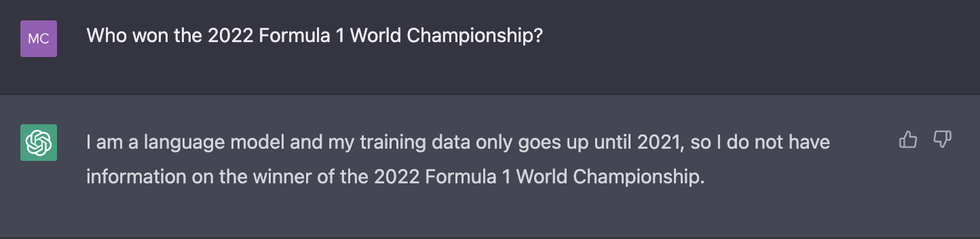

fact that information is constantly evolving, and it will be incredibly

difficult for ChatGPT to keep up, he explains.

“These models take a long time and a lot of effort to train. ... But that means that they don’t get trained very often,”

Singh says. While ChatGPT’s abilities are definitely impressive, its

knowledge is limited to 2021 data, which means ChatGPT doesn’t really

know about anything that happened in the years that followed.

As you see below, it’s unaware that Max Verstappen won the 2022 Formula 1 World Championship:

ChatGPT recently became free to use, which is great. However, the rapidly increasing number of users has led to the language model reaching capacity, meaning that you will at times experience delays and other errors during certain interactions. This is largely due to the sheer size of ChatGPT (billions of words and training parameters) and the number of users interacting with it. Amazing that it can spit out an answer for you in mere seconds, right?

The other big deal is that ChatGPT doesn’t know anything about the user. “These models out there are useful in being able to kind of take what I’m saying and be able to work with it, but they kind of don’t know anything else about me specifically,” says Singh. “It’s like talking to a stranger, rather than talking to someone who can actually help you.”

Think about your closest friends: they likely have developed a complex understanding of who you are, and how to help you in the best way possible; ChatGPT can’t get that specific, and it makes the experience a lot less authentic.

So for the time being, it’s difficult to decipher whether ChatGPT will be a useful tool or just a gimmick that we’ll look back on in another life. We like to think of it as the 2023 version of Google Glass.

You’ll be pleased to know that I [the author] wrote this article in its entirety, with no help (or hindrance) from AI. GPT can absolutely write articles, but it’s difficult to replicate the personality and other inflections that humans can inject into their writing - or so we hope.

However, we did use AI to generate the lead image at the top of this story. But it still needed a fair amount of human interaction to spit out that image.

[Now that is interesting, robot artists, something we take a look at]

https://bard.google.com/

https://chat.openai.com/

https://www.popularmechanics.com/technology/a42733497/how-does-chatgpt-work/


Wow! Now that is some big robot. And we like the VW Kombi Wagon, which, incidentally, Jameson Hunter and the Cleaner Ocean Foundation use for events. For real. See the restoration of Miss Ocean.

Be conscious of the fact that AI chatbots answer questions differently each time you use them, so it is important to get a spread of responses. We noticed that some are inaccurate in detail, while others are spot on. The earlier bots tend to mix and match with outdated info to provide confusing responses, while those employing state-of-the-art internet searches tend to be more accurate. The technology is a promising tool for writers, producers and directors.

The day AI comes up with a fully researched and polished script all on its own is the day that anyone and everyone, anywhere and everywhere, will be writing masterpieces and retiring to Hawaii or the Caribbean, as all the scripts would be investment dreams. The problem is that the bots will write similar stories for everyone, and the market will be flooded with millions of near-identical works. Nothing original or new. But that is crazy. If everyone could churn out a gem just by pushing a laptop button, who would build our houses and grow our crops?

And who would own the copyright and trademarks, if any, if the work was (effectively) plagiarized as a literary mash of other works on the web? We guess the 40% rule will apply, as in music production.
